Mute audio

Mute the audio by setting the localMediaStream.audioTrack.enabled property. Using this property, you can tell the SDK to send or not send audio data from either a local or remote peer in the specified call session.

self.session?.localMediaStream.audioTrack.enabled.toggle()

self.session.localMediaStream.audioTrack.enabled ^= 1;

Mute remote audio

You can always get the remote audio track for a specific user ID in the call using the QBRTCSession methods below (assuming that the track exists).

let remoteAudioTrack = self.session?.remoteAudioTrack(withUserID: 24450) // audio track for user 24450

QBRTCAudioTrack *remoteAudioTrack = [self.session remoteAudioTrackWithUserID:@(24450)]; // audio track for user 24450

remoteAudioTrack?.enabled = false

remoteAudioTrack.enabled = NO;

Disable video

Turn the video off or on by setting the localMediaStream.videoTrack.enabled property. Using this property, you can tell the SDK not to send video data from either a local or remote peer in the specified call session.

self.session?.localMediaStream.videoTrack.enabled.toggle()

self.session.localMediaStream.videoTrack.enabled ^=1;

Due to WebRTC restrictions, black frames will be placed into the stream content if video is disabled.

Switch camera

You can switch the video camera during a call. (Default: front camera)

// to change some time after, for example, at the moment of call

let position = self.videoCapture?.position

let newPosition: AVCaptureDevice.Position = position == .front ? .back : .front

// check whether videoCapture has or has not camera position

// for example, some iPods do not have front camera

if self.videoCapture?.hasCameraForPosition(newPosition) == true {

self.videoCapture?.position = newPosition

}

// to change some time after, for example, at the moment of call

AVCaptureDevicePosition position = self.videoCapture.position;

AVCaptureDevicePosition newPosition = position == AVCaptureDevicePositionBack ? AVCaptureDevicePositionFront : AVCaptureDevicePositionBack;

// check whether videoCapture has or has not camera position

// for example, some iPods do not have front camera

if ([self.videoCapture hasCameraForPosition:newPosition]) {

self.videoCapture.position = newPosition;

}

Manage audio session

QuickbloxWebRTC has its own audio session management, which you need to use. It is located in the QBRTCAudioSession class. This class is a singleton, and you can always access the shared session by calling the instance() method.

let audioSession = QBRTCAudioSession.instance()

QBRTCAudioSession *audioSession = [QBRTCAudioSession instance];

See the QBRTCAudioSession class header for more information.

You can configure an audio session using the setConfiguration() method.

let audioSession = QBRTCAudioSession.instance()

let configuration = QBRTCAudioSessionConfiguration()

configuration.categoryOptions.insert(.duckOthers)

// adding bluetooth support

configuration.categoryOptions.insert(.allowBluetooth)

configuration.categoryOptions.insert(.allowBluetoothA2DP)

// adding airplay support

configuration.categoryOptions.insert(.allowAirPlay)

if hasVideo == true {

// setting mode to video chat to enable airplay audio and speaker only

configuration.mode = AVAudioSession.Mode.videoChat.rawValue

}

audioSession.setConfiguration(configuration)

QBRTCAudioSession *audioSession = [QBRTCAudioSession instance];

QBRTCAudioSessionConfiguration *configuration = [[QBRTCAudioSessionConfiguration alloc] init];

configuration.categoryOptions |= AVAudioSessionCategoryOptionDuckOthers;

// adding bluetooth support

configuration.categoryOptions |= AVAudioSessionCategoryOptionAllowBluetooth;

configuration.categoryOptions |= AVAudioSessionCategoryOptionAllowBluetoothA2DP;

// adding airplay support

configuration.categoryOptions |= AVAudioSessionCategoryOptionAllowAirPlay;

if (hasVideo) {

configuration.mode = AVAudioSessionModeVideoChat;

}

[audioSession setConfiguration:configuration];

The setConfiguration() method accepts a configuration object with the following fields:

| Field | Required | Description |
|---|---|---|
| categoryOptions | no | Audio session category options let you tailor the behavior of the active audio session category. Default: allowBluetooth. See Apple documentation to learn about supported values. |
| mode | no | Audio session mode lets you assign specialized behavior to an audio session category. Default: videoChat. See Apple documentation to learn about supported values. |

Activate

Activate an audio session before every call.

let audioSession = QBRTCAudioSession.instance()

let isActive = true

audioSession.setActive(isActive)

// or activate with configuration

let configuration = QBRTCAudioSessionConfiguration()

audioSession.setConfiguration(configuration, active: isActive)

QBRTCAudioSession *audioSession = [QBRTCAudioSession instance];

BOOL isActive = YES;

[audioSession setActive:isActive];

// or activate with configuration

QBRTCAudioSessionConfiguration *configuration = [[QBRTCAudioSessionConfiguration alloc] init];

[audioSession setConfiguration:configuration active:isActive];

The setActive() method accepts the following argument:

| Argument | Required | Description |
|---|---|---|
| isActive | yes | Boolean parameter. Set to true to activate the audio session. |

The setConfiguration() method accepts the following arguments:

| Argument | Required | Description |
|---|---|---|
| configuration | yes | Audio session configuration object. You can set the configuration object fields. See this section to learn how to set audio session configuration. |
| isActive | yes | Boolean parameter. Set to true to activate the audio session. |

Deactivate

Deactivate an audio session after the call ends.

let isActive = false

QBRTCAudioSession.instance().setActive(isActive)

BOOL isActive = NO;

[QBRTCAudioSession.instance setActive:isActive];

| Argument | Required | Description |
|---|---|---|
| isActive | yes | Boolean parameter. Set to false to deactivate the audio session. |

Set audio output

You can route audio output to either the receiver or the speaker. The receiver is not available if you set the AVAudioSessionModeVideoChat mode.

let audioSession = QBRTCAudioSession.instance()

// setting audio through receiver

let receiver: AVAudioSession.PortOverride = .none

audioSession.overrideOutputAudioPort(receiver)

// setting audio through speaker

let speaker: AVAudioSession.PortOverride = .speaker

audioSession.overrideOutputAudioPort(speaker)

QBRTCAudioSession *audioSession = [QBRTCAudioSession instance];

// setting audio through receiver

AVAudioSessionPortOverride receiver = AVAudioSessionPortOverrideNone;

[audioSession overrideOutputAudioPort:receiver];

// setting audio through speaker

AVAudioSessionPortOverride speaker = AVAudioSessionPortOverrideSpeaker;

[audioSession overrideOutputAudioPort:speaker];

| Argument | Required | Description |
|---|---|---|
| receiver/speaker | yes | Port override for the audio output: AVAudioSessionPortOverrideNone (receiver) or AVAudioSessionPortOverrideSpeaker (speaker). |

Screen sharing

Screen sharing allows you to share information from your application to all of your opponents. It gives you the ability to promote your product, share a screen with formulas to students, distribute podcasts, share video/audio/photo moments of your life in real-time all over the world.

To implement this feature in your application, we give you the ability to create custom video capture.

Video capture is a base class you should inherit from in order to send frames to your opponents. There are two ways to implement this feature in your application.

Due to Apple iOS restrictions, screen sharing feature works only within the app it is used in.

if #available(iOS 11.0, *) {

self.screenCapture = QBRTCVideoCapture()

RPScreenRecorder.shared().startCapture(handler: { (sampleBuffer, type, error) in

switch type {

case .video :

// skip sample buffers that carry no image data

guard let source = CMSampleBufferGetImageBuffer(sampleBuffer) else {

break

}

let videoFrame = QBRTCVideoFrame(pixelBuffer: source, videoRotation: ._0)

self.screenCapture.adaptOutputFormat(toWidth: UInt(UIScreen.main.bounds.width), height: UInt(UIScreen.main.bounds.height), fps: 30)

self.screenCapture.send(videoFrame)

break

default:

break

}

}) { (error) in

if error != nil {

print(error)

}

}

}

self.session?.localMediaStream.videoTrack.videoCapture = self.screenCapture

if ([UIDevice currentDevice].systemVersion.integerValue >= 11) {

self.screenCapture = [[QBRTCVideoCapture alloc] init];

[RPScreenRecorder.sharedRecorder startCaptureWithHandler:^(CMSampleBufferRef _Nonnull sampleBuffer, RPSampleBufferType bufferType, NSError * _Nullable error) {

switch (bufferType) {

case RPSampleBufferTypeVideo: {

CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);

QBRTCVideoFrame *videoFrame = [[QBRTCVideoFrame alloc] initWithPixelBuffer:pixelBuffer videoRotation:QBRTCVideoRotation_0];

[self.screenCapture adaptOutputFormatToWidth:(NSUInteger)[UIScreen mainScreen].bounds.size.width height:(NSUInteger)[UIScreen mainScreen].bounds.size.height fps:30];

[self.screenCapture sendVideoFrame:videoFrame];

break;

}

default:

break;

}

} completionHandler:^(NSError * _Nullable error) {

NSLog(@"error: %@", error);

}];

self.session.localMediaStream.videoTrack.videoCapture = self.screenCapture;

}

self.screenCapture should be a property of QBRTCVideoCapture class type.

WebRTC supports a maximum rate of 30 fps. Even though RPScreenRecorder supports 60 fps, you must set the rate to 30 or lower.
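Because the recorder may deliver frames faster than the 30 fps limit, one option is to drop frames that arrive too early. The following is a minimal sketch of that idea, not SDK code: `FrameThrottle` and `shouldSend(at:)` are hypothetical names, and timestamps are assumed to be in seconds.

```swift
// Hypothetical helper (not part of QuickbloxWebRTC): drops frames that arrive
// sooner than the minimum interval implied by the target frame rate.
struct FrameThrottle {
    let minInterval: Double
    private var lastSentAt: Double?
    // Small tolerance that absorbs floating-point jitter in timestamps.
    private let tolerance = 1e-6

    init(maxFPS: Double) {
        // Never allow more than 30 fps, the limit stated in the text.
        let cappedFPS = min(max(maxFPS, 1), 30)
        minInterval = 1.0 / cappedFPS
    }

    // Returns true if a frame captured at `timestamp` (seconds) should be sent.
    mutating func shouldSend(at timestamp: Double) -> Bool {
        if let last = lastSentAt, timestamp - last < minInterval - tolerance {
            return false
        }
        lastSentAt = timestamp
        return true
    }
}

// Simulate one second of 60 fps capture and count the frames that pass.
var throttle = FrameThrottle(maxFPS: 60)
var sent = 0
for i in 0..<60 {
    if throttle.shouldSend(at: Double(i) / 60.0) { sent += 1 }
}
print(sent) // 30
```

In a real capture handler you would call `shouldSend(at:)` with the sample buffer's presentation time before building and sending the video frame.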

QBRTCVideoCapture class allows sending frames to your opponents. By inheriting this class you are able to provide custom logic to create frames, modify them, and then send to your opponents. Below you can find an example of how to implement a custom video capture and send frames to your opponents (this class is designed to share 5 screenshots per second).

import UIKit

import QuickbloxWebRTC

struct ScreenCaptureConstant {

// * By default sending frames in screen share using BiPlanarFullRange pixel format type.

// * You can also send them using ARGB by setting this constant to false.

static let isUseBiPlanarFormatTypeForShare = true

}

// * Class implements screen sharing and converting screenshots to destination format

// * in order to send frames to your opponents

class ScreenCapture: QBRTCVideoCapture {

//MARK: - Properties

private var view = UIView()

private var displayLink = CADisplayLink()

static let sharedGPUContextSharedContext: CIContext = {

let options = [CIContextOption.priorityRequestLow: true]

let sharedContext = CIContext(options: options)

return sharedContext

}()

//MARK: - Life Cycle

// * Initialize a video capturer view and start grabbing content of given view

init(view: UIView) {

super.init()

self.view = view

}

private func sharedContext() -> CIContext {

return ScreenCapture.sharedGPUContextSharedContext

}

//MARK: - Enter Background / Foreground notifications

@objc func willEnterForeground(_ note: Notification?) {

displayLink.isPaused = false

}

@objc func didEnterBackground(_ note: Notification?) {

displayLink.isPaused = true

}

//MARK: - Internal Methods

func screenshot() -> UIImage? {

let layer = view.layer

UIGraphicsBeginImageContextWithOptions(layer.frame.size, true, 1);

guard let context = UIGraphicsGetCurrentContext() else {

return nil

}

layer.render(in:context)

let screenshotImage = UIGraphicsGetImageFromCurrentImageContext()

UIGraphicsEndImageContext()

return screenshotImage

}

@objc private func sendPixelBuffer(_ sender: CADisplayLink?) {

guard let image = self.screenshot() else {

return

}

videoQueue.async(execute: { [weak self] in

guard let self = self else {

return

}

let renderWidth = Int(image.size.width)

let renderHeight = Int(image.size.height)

var pixelFormatType = kCVPixelFormatType_420YpCbCr8BiPlanarFullRange

var pixelBufferAttributes = [kCVPixelBufferIOSurfacePropertiesKey: [:]] as CFDictionary

if ScreenCaptureConstant.isUseBiPlanarFormatTypeForShare == false {

pixelFormatType = kCVPixelFormatType_32ARGB

pixelBufferAttributes = [kCVPixelBufferCGImageCompatibilityKey: kCFBooleanFalse, kCVPixelBufferCGBitmapContextCompatibilityKey: kCFBooleanFalse] as CFDictionary

}

var pixelBuffer: CVPixelBuffer?

let status = CVPixelBufferCreate(kCFAllocatorDefault, renderWidth, renderHeight, pixelFormatType, pixelBufferAttributes, &pixelBuffer)

if status != kCVReturnSuccess {

return

}

guard let buffer = pixelBuffer else {

return

}

CVPixelBufferLockBaseAddress(buffer, CVPixelBufferLockFlags(rawValue: 0))

if let renderImage = CIImage(image: image),

ScreenCaptureConstant.isUseBiPlanarFormatTypeForShare == true {

self.sharedContext().render(renderImage, to: buffer)

} else if let cgImage = image.cgImage {

let pxdata = CVPixelBufferGetBaseAddress(buffer)

let rgbColorSpace = CGColorSpaceCreateDeviceRGB()

let bitmapInfo = CGBitmapInfo.byteOrder32Little.rawValue | CGImageAlphaInfo.premultipliedFirst.rawValue

let context = CGContext(data: pxdata, width: renderWidth, height: renderHeight, bitsPerComponent: 8, bytesPerRow: renderWidth * 4, space: rgbColorSpace, bitmapInfo: bitmapInfo)

let rect = CGRect(x: 0, y: 0, width: renderWidth, height: renderHeight)

context?.draw(cgImage, in: rect)

}

CVPixelBufferUnlockBaseAddress(buffer, CVPixelBufferLockFlags(rawValue: 0))

let videoFrame = QBRTCVideoFrame(pixelBuffer: buffer, videoRotation: QBRTCVideoRotation._0)

self.send(videoFrame)

})

}

// MARK: - <QBRTCVideoCapture>

override func didSet(to videoTrack: QBRTCLocalVideoTrack?) {

super.didSet(to: videoTrack)

displayLink = CADisplayLink(target: self, selector: #selector(sendPixelBuffer(_:)))

displayLink.add(to: .main, forMode: .common)

displayLink.preferredFramesPerSecond = 5 // 5 fps

NotificationCenter.default.addObserver(self, selector: #selector(willEnterForeground(_:)), name: UIApplication.willEnterForegroundNotification, object: nil)

NotificationCenter.default.addObserver(self, selector: #selector(didEnterBackground(_:)), name: UIApplication.didEnterBackgroundNotification, object: nil)

}

override func didRemove(from videoTrack: QBRTCLocalVideoTrack?) {

super.didRemove(from: videoTrack)

displayLink.isPaused = true

displayLink.remove(from: .main, forMode: .common)

NotificationCenter.default.removeObserver(self, name: UIApplication.willEnterForegroundNotification, object: nil)

NotificationCenter.default.removeObserver(self, name: UIApplication.didEnterBackgroundNotification, object: nil)

}

}

#import "ScreenCapture.h"

// * By default sending frames in screen share using BiPlanarFullRange pixel format type.

// * You can also send them using ARGB by setting this constant to NO.

static const BOOL kQBRTCUseBiPlanarFormatTypeForShare = YES;

@interface ScreenCapture()

@property (weak, nonatomic) UIView * view;

@property (strong, nonatomic) CADisplayLink *displayLink;

@end

@implementation ScreenCapture

- (instancetype)initWithView:(UIView *)view {

self = [super init];

if (self) {

_view = view;

}

return self;

}

#pragma mark - Enter BG / FG notifications

- (void)willEnterForeground:(NSNotification *)note {

self.displayLink.paused = NO;

}

- (void)didEnterBackground:(NSNotification *)note {

self.displayLink.paused = YES;

}

#pragma mark -

- (UIImage *)screenshot {

UIGraphicsBeginImageContextWithOptions(_view.frame.size, YES, 1);

[_view drawViewHierarchyInRect:_view.bounds afterScreenUpdates:NO];

UIImage *image = UIGraphicsGetImageFromCurrentImageContext();

UIGraphicsEndImageContext();

return image;

}

- (CIContext *)qb_sharedGPUContext {

static CIContext *sharedContext;

static dispatch_once_t onceToken;

dispatch_once(&onceToken, ^{

NSDictionary *options = @{kCIContextPriorityRequestLow: @YES};

sharedContext = [CIContext contextWithOptions:options];

});

return sharedContext;

}

- (void)sendPixelBuffer:(CADisplayLink *)sender {

__weak __typeof(self)weakSelf = self;

dispatch_async(self.videoQueue, ^{

@autoreleasepool {

UIImage *image = [weakSelf screenshot];

int renderWidth = image.size.width;

int renderHeight = image.size.height;

CVPixelBufferRef buffer = NULL;

OSType pixelFormatType;

CFDictionaryRef pixelBufferAttributes = NULL;

if (kQBRTCUseBiPlanarFormatTypeForShare) {

pixelFormatType = kCVPixelFormatType_420YpCbCr8BiPlanarFullRange;

pixelBufferAttributes = (__bridge CFDictionaryRef) @{(__bridge NSString *)kCVPixelBufferIOSurfacePropertiesKey: @{}};

} else {

pixelFormatType = kCVPixelFormatType_32ARGB;

pixelBufferAttributes = (__bridge CFDictionaryRef) @{(NSString *)kCVPixelBufferCGImageCompatibilityKey : @NO,(NSString *)kCVPixelBufferCGBitmapContextCompatibilityKey : @NO};

}

CVReturn status = CVPixelBufferCreate(kCFAllocatorDefault, renderWidth, renderHeight, pixelFormatType, pixelBufferAttributes, &buffer);

if (status == kCVReturnSuccess && buffer != NULL) {

CVPixelBufferLockBaseAddress(buffer, 0);

if (kQBRTCUseBiPlanarFormatTypeForShare) {

CIImage *rImage = [[CIImage alloc] initWithImage:image];

[weakSelf.qb_sharedGPUContext render:rImage toCVPixelBuffer:buffer];

} else {

void *pxdata = CVPixelBufferGetBaseAddress(buffer);

CGColorSpaceRef rgbColorSpace = CGColorSpaceCreateDeviceRGB();

uint32_t bitmapInfo = kCGBitmapByteOrder32Little | kCGImageAlphaPremultipliedFirst;

CGContextRef context =

CGBitmapContextCreate(pxdata, renderWidth, renderHeight, 8, renderWidth * 4, rgbColorSpace, bitmapInfo);

CGContextDrawImage(context, CGRectMake(0, 0, renderWidth, renderHeight), [image CGImage]);

CGColorSpaceRelease(rgbColorSpace);

CGContextRelease(context);

}

CVPixelBufferUnlockBaseAddress(buffer, 0);

QBRTCVideoFrame *videoFrame = [[QBRTCVideoFrame alloc] initWithPixelBuffer:buffer videoRotation:QBRTCVideoRotation_0];

[super sendVideoFrame:videoFrame];

}

CVPixelBufferRelease(buffer);

}

});

}

#pragma mark - <QBRTCVideoCapture>

- (void)didSetToVideoTrack:(QBRTCLocalVideoTrack *)videoTrack {

[super didSetToVideoTrack:videoTrack];

self.displayLink = [CADisplayLink displayLinkWithTarget:self selector:@selector(sendPixelBuffer:)];

[self.displayLink addToRunLoop:[NSRunLoop mainRunLoop] forMode:NSRunLoopCommonModes];

self.displayLink.preferredFramesPerSecond = 5; // 5 fps

[[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(willEnterForeground:) name:UIApplicationWillEnterForegroundNotification object:nil];

[[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(didEnterBackground:) name:UIApplicationDidEnterBackgroundNotification object:nil];

}

- (void)didRemoveFromVideoTrack:(QBRTCLocalVideoTrack *)videoTrack {

[super didRemoveFromVideoTrack:videoTrack];

self.displayLink.paused = YES;

[self.displayLink removeFromRunLoop:[NSRunLoop mainRunLoop] forMode:NSRunLoopCommonModes];

self.displayLink = nil;

[[NSNotificationCenter defaultCenter] removeObserver:self name:UIApplicationWillEnterForegroundNotification object:nil];

[[NSNotificationCenter defaultCenter] removeObserver:self name:UIApplicationDidEnterBackgroundNotification object:nil];

}

@end

WebRTC stats reporting

Stats reporting is a powerful tool that helps you debug a call when there are problems with it (for example, lags or missing audio/video). To enable stats reports, first set the stats reporting frequency using the setStatsReportTimeInterval() method below.

QBRTCConfig.setStatsReportTimeInterval(5) // receive stats report every 5 seconds

[QBRTCConfig setStatsReportTimeInterval:5]; // receive stats report every 5 seconds

Then implement the delegate method below to receive a QBRTCStatsReport instance for the current period of time.

func session(_ session: QBRTCBaseSession, updatedStatsReport report: QBRTCStatsReport, forUserID userID: NSNumber) {

print(report.statsString())

}

- (void)session:(QBRTCBaseSession *)session updatedStatsReport:(QBRTCStatsReport *)report forUserID:(NSNumber *)userID {

NSLog(@"%@", [report statsString]);

}

By calling statsString(), you receive a generic report string that contains the most useful data for debugging a call, for example:

CN 565ms | local->local/udp | (s)248Kbps | (r)869Kbps

VS (input) 640x480@30fps | (sent) 640x480@30fps

VS (enc) 279Kbps/260Kbps | (sent) 200Kbps/292Kbps | 8ms | H264

AvgQP (past 30 encoded frames) = 36

VR (recv) 640x480@26fps | (decoded)27 | (output)27fps | 827Kbps/0bps | 4ms

AS 38Kbps | opus

AR 37Kbps | opus | 168ms | (expandrate)0.190002

Packets lost: VS 17 | VR 0 | AS 3 | AR 0

- CN: Connection info.

- VS: Video sent.

- VR: Video received.

- AvgQP: Average quantization parameter (only valid for video; it is calculated as a fraction of the current delta sum over the current delta of encoded frames; a low value corresponds to good quality; the range of the value per frame is defined by the codec being used).

- AS: Audio sent.

- AR: Audio received.

You can also use stats reporting to see who is currently talking in a group call. Use audioReceivedOutputLevel for that.

Take a look at the QBRTCStatsReport header file to see all of the other stats properties that can be useful for you.
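If you only have the report string, individual values can be pulled out with plain string parsing. The sketch below assumes the "Packets lost" line always has the shape shown in the sample report above; `parsePacketsLost` is a hypothetical helper, not an SDK function, and the dedicated QBRTCStatsReport properties are likely a more robust source.

```swift
// Parses a line such as "Packets lost: VS 17 | VR 0 | AS 3 | AR 0"
// into ["VS": 17, "VR": 0, "AS": 3, "AR": 0]. Returns nil for other lines.
func parsePacketsLost(_ line: String) -> [String: Int]? {
    let prefix = "Packets lost:"
    guard line.hasPrefix(prefix) else { return nil }
    var result: [String: Int] = [:]
    for part in line.dropFirst(prefix.count).split(separator: "|") {
        // Each part looks like " VS 17 "; splitting on spaces drops the padding.
        let fields = part.split(separator: " ")
        guard fields.count == 2, let count = Int(fields[1]) else { return nil }
        result[String(fields[0])] = count
    }
    return result
}

let sample = "Packets lost: VS 17 | VR 0 | AS 3 | AR 0"
if let lost = parsePacketsLost(sample) {
    print(lost["VS"] ?? 0) // 17
}
```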

Calling offline users (CallKit)

Before starting you need to configure APNs and/or VoIP push certificate in your admin panel. Use this guide to add push notifications feature to your QuickBlox application.

Generic push notifications

You can send a regular push notification to the users you call to notify them about your call (if they have subscribed to push notifications in their app; see the Push notifications guide).

let currentUserFullName = QBSession.current.currentUser?.fullName ?? "Someone" // fallback avoids "Optional(...)" in the push text

let text = "\(currentUserFullName) is calling you"

let users = self.session?.opponentsIDs.map({ $0.stringValue }).joined(separator: ",")

QBRequest.sendPush(withText: text, toUsers:users!, successBlock: { (response, event) in

print("Push sent!")

}, errorBlock: { (error) in

print(error)

})

NSString *currentUserFullName = [[[QBSession currentSession] currentUser] fullName];

NSString *text = [NSString stringWithFormat:@"%@ is calling you", currentUserFullName];

NSString *users = [self.session.opponentsIDs componentsJoinedByString:@","];

[QBRequest sendPushWithText:text toUsers:users successBlock:^(QBResponse * _Nonnull response, NSArray<QBMEvent *> * _Nullable events) {

NSLog(@"Push sent!");

} errorBlock:^(QBError * _Nonnull error) {

NSLog(@"Can not send push: %@", error);

}];
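Both snippets above pass the recipients as a single comma-separated string of user IDs. A minimal sketch of how that string is built, using hypothetical IDs in place of the session's opponentsIDs:

```swift
// Hypothetical opponent IDs standing in for session.opponentsIDs.
let opponentIDs: [Int] = [24450, 24451, 24452]

// Builds the comma-separated recipients string passed as the toUsers argument.
func recipientsString(from ids: [Int]) -> String {
    return ids.map { String($0) }.joined(separator: ",")
}

print(recipientsString(from: opponentIDs)) // 24450,24451,24452
```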

Apple CallKit using VoIP push notifications

QuickbloxWebRTC fully supports Apple CallKit. In this block, we will guide you through the most important things you need to know when integrating CallKit into your application. To learn more about this process, review the above-specified link.

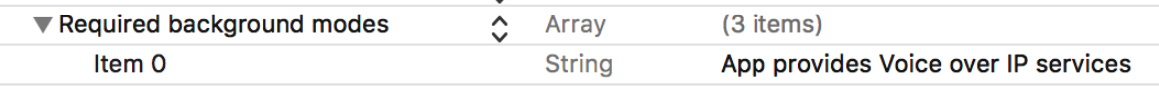

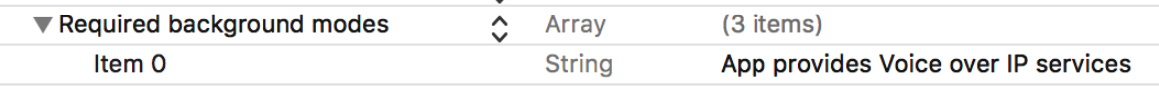

Project preparations

In your Xcode project, make sure that your app supports Voice over IP services. To do this, open your Info.plist and make sure the Required background modes array contains the "App provides Voice over IP services" item (the voip value of the UIBackgroundModes key).

Now you are ready to integrate CallKit methods using Apple guide here.

Managing audio session

CallKit requires you to manage Audio session by yourself. Use QBRTCAudioSession instance for that task. See Manage audio session section for more information.

Initializing audio session

You must initialize the audio session every time before you call the reportNewIncomingCall(with:update:completion:) method of CXProvider, which shows the incoming call screen. Before initializing the audio session, set the useManualAudio property to YES. This prevents WebRTC audio from being activated before iOS allows it. You will need to activate audio manually later. See the Manage audio session section for more information.

Managing audio session activations

CXProviderDelegate has 2 delegate methods that you must conform to:

- provider(_:didActivate:)

- provider(_:didDeactivate:)

Using the QBRTCAudioSessionActivationDelegate protocol of the QBRTCAudioSession class, notify the SDK that the audio session was activated outside of it. provider(_:didActivate:) is the CXProviderDelegate method where you need to activate audio manually. Set the audioEnabled property of the QBRTCAudioSession class there to enable WebRTC audio, because at that point iOS has raised the audio session priority of your app to the top.

func provider(_ provider: CXProvider, didActivate audioSession: AVAudioSession) {

let callAudioSession = QBRTCAudioSession.instance()

callAudioSession.audioSessionDidActivate(audioSession)

// enabling audio now

callAudioSession.isAudioEnabled = true

}

- (void)provider:(CXProvider *)__unused provider didActivateAudioSession:(AVAudioSession *)audioSession {

QBRTCAudioSession *callAudioSession = [QBRTCAudioSession instance];

[callAudioSession audioSessionDidActivate:audioSession];

// enabling audio now

callAudioSession.audioEnabled = YES;

}

Deinitialize the audio session only after CXProvider deactivates it in provider(_:didDeactivate:) of CXProviderDelegate. Deinitializing the audio session earlier would lead to issues with the audio session.

func provider(_ provider: CXProvider, didDeactivate audioSession: AVAudioSession) {

if QBRTCAudioSession.instance().isActive == false {

return

}

QBRTCAudioSession.instance().audioSessionDidDeactivate(audioSession)

}

- (void)provider:(CXProvider *)provider didDeactivateAudioSession:(AVAudioSession *)audioSession {

if (QBRTCAudioSession.instance.isActive == NO) {

return;

}

[[QBRTCAudioSession instance] audioSessionDidDeactivate:audioSession];

}

If you also have deinitialization code for QBRTCAudioSession somewhere else in your app, you can check and ignore it with the QBRTCAudioSessionActivationDelegate audioSessionIsActivatedOutside() method. This way, you know for sure that CallKit is in charge of your audio session. Do not forget to restore the QBRTCAudioSession properties to their default values in the provider(_:perform:) method of CXProviderDelegate.

// The deinitialization code of `QBRTCAudioSession` somewhere else in your app

private func closeCall() {

QBRTCAudioSession.instance().setActive(false)

}

// MARK: - CXProviderDelegate protocol

func provider(_ provider: CXProvider, perform action: CXEndCallAction) {

QBRTCAudioSession.instance().isAudioEnabled = false

QBRTCAudioSession.instance().useManualAudio = false

if (QBRTCAudioSession.instance().isActive) {

QBRTCAudioSession.instance().setActive(false)

}

action.fulfill()

}

// The deinitialization code of `QBRTCAudioSession` somewhere else in your app

- (void)closeCall {

if (QBRTCAudioSession.instance.isActive == NO) { return; }

[QBRTCAudioSession.instance setActive:NO];

}

// MARK: - CXProviderDelegate protocol

- (void)provider:(CXProvider *)__unused provider performEndCallAction:(CXEndCallAction *)action {

QBRTCAudioSession.instance.audioEnabled = NO;

QBRTCAudioSession.instance.useManualAudio = NO;

[QBRTCAudioSession.instance setActive:NO];

[action fulfill];

}

General settings

You can change different settings for your calls using QBRTCConfig class. All of them are listed below.

Answer time interval

If an opponent hasn’t answered you within an answer time interval, then session(_:userDidNotRespond:) and session(_:connectionClosedForUser:) delegate methods will be called. The answer time interval shows how much time an opponent has to answer your call. Set the answer time interval using the code snippet below.

QBRTCConfig.setAnswerTimeInterval(45)

[QBRTCConfig setAnswerTimeInterval:45];

- By default, the answer time interval is 45 seconds.

- The maximum value is 60 seconds.

- The minimum value is 10 seconds.
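If the interval comes from user input or remote configuration, it can be kept within the documented bounds before it is passed to setAnswerTimeInterval(). The following is a minimal sketch; `clampedAnswerTimeInterval` is a hypothetical helper, and how the SDK itself treats out-of-range values is not covered here.

```swift
// Hypothetical helper: keeps a requested answer time interval within the
// documented bounds (10...60 seconds) before passing it to the SDK.
func clampedAnswerTimeInterval(_ seconds: Double) -> Double {
    let minimum = 10.0
    let maximum = 60.0
    return min(max(seconds, minimum), maximum)
}

print(clampedAnswerTimeInterval(5))   // 10.0
print(clampedAnswerTimeInterval(45))  // 45.0
print(clampedAnswerTimeInterval(90))  // 60.0
```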

Dialing time interval

Dialing time interval indicates how often to notify your opponents about your call. Set the dialing time interval using the code snippet below.

QBRTCConfig.setDialingTimeInterval(5)

[QBRTCConfig setDialingTimeInterval:5];

- By default, the dialing time interval is 5 seconds.

- The minimum value is 3 seconds.
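The two intervals interact: a shorter dialing interval means more notifications during the answer window. The sketch below gives a rough estimate only, assuming one notification per dialing interval for the whole window; the SDK's actual scheduling may differ, and `estimatedDialingNotifications` is a hypothetical name.

```swift
// Rough estimate, assuming one notification per dialing interval for the
// whole answer window; the SDK's actual scheduling may differ.
func estimatedDialingNotifications(answerInterval: Double, dialingInterval: Double) -> Int {
    guard dialingInterval > 0, answerInterval > 0 else { return 0 }
    return Int(answerInterval / dialingInterval)
}

// With the defaults above (45 s answer interval, 5 s dialing interval):
print(estimatedDialingNotifications(answerInterval: 45, dialingInterval: 5)) // 9
```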

Datagram Transport Layer Security

Datagram Transport Layer Security (DTLS) is used to provide communications privacy for datagram protocols. This fosters a secure signaling channel that cannot be tampered with. In other words, no eavesdropping or message forgery can occur on a DTLS encrypted connection.

QBRTCConfig.setDTLSEnabled(true)

[QBRTCConfig setDTLSEnabled:YES];

By default, DTLS is enabled.

Custom ICE servers

You can customize a list of ICE servers. By default, WebRTC module will use internal ICE servers that are usually enough, but you can always set your own. WebRTC engine will choose the TURN relay with the lowest round-trip time. Thus, setting multiple TURN servers allows your application to scale-up in terms of bandwidth and number of users. Review our Setup guide to learn how to configure custom ICE servers.
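The selection rule described above can be pictured as picking the minimum from a list of measurements. This is only an illustration of the rule, not how the WebRTC engine is implemented; `RelayMeasurement`, the host names, and the timings are all hypothetical.

```swift
// Hypothetical measurement record; hosts and timings are illustrative only.
struct RelayMeasurement {
    let host: String
    let roundTripMillis: Int
}

// Returns the relay with the lowest round-trip time, or nil for an empty list.
func fastestRelay(among relays: [RelayMeasurement]) -> RelayMeasurement? {
    return relays.min { $0.roundTripMillis < $1.roundTripMillis }
}

let measured = [
    RelayMeasurement(host: "turn-a.example.com", roundTripMillis: 120),
    RelayMeasurement(host: "turn-b.example.com", roundTripMillis: 45),
    RelayMeasurement(host: "turn-c.example.com", roundTripMillis: 80),
]
print(fastestRelay(among: measured)?.host ?? "none") // turn-b.example.com
```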

You can configure a variety of media settings such as video/audio codecs, camera resolution, etc.

Video codecs

You can choose video codecs from available values:

- QBRTCVideoCodecVP8 - VP8 video codec

- QBRTCVideoCodecH264Baseline - H264 baseline video codec

- QBRTCVideoCodecH264High - H264 high video codec

VP8 is a software-supported video codec on Apple devices, which means it is the most demanding among all available ones.

H264 is a hardware-supported video codec, which means that it is the optimal choice for encoding and decoding video. Hardware acceleration gives the best performance when encoding and decoding video frames. There are two options available:

- baseline is the most suited one for video calls as it has a low cost (default value).

- high is mainly suited for broadcast to ensure you have the best picture possible. Takes more resources to encode/decode for the same resolution you set.

let mediaStreamConfiguration = QBRTCMediaStreamConfiguration.default()

mediaStreamConfiguration.videoCodec = .h264Baseline

[QBRTCMediaStreamConfiguration defaultConfiguration].videoCodec = QBRTCVideoCodecH264Baseline;

This sets only your preferred codec, because WebRTC always chooses the most suitable one for both sides of a call through negotiation.

Video quality

Video quality depends on the hardware you use. It also depends on the network you use and how many connections you have. For multi-calls, set lower video quality. For 1 to 1 calls, you can set a higher quality.

You can use our formatsWithPosition() method in order to get all supported formats for a current device.

let cameraPosition:AVCaptureDevice.Position = .front // front or back

let videoFormats = QBRTCCameraCapture.formats(with: cameraPosition) // Array of possible QBRTCVideoFormat video formats for requested device for cameraPosition

AVCaptureDevicePosition cameraPosition = AVCaptureDevicePositionFront; // front or back

NSArray<QBRTCVideoFormat *> *formats = [QBRTCCameraCapture formatsWithPosition:cameraPosition]; // Array of possible QBRTCVideoFormat video formats for requested device for cameraPosition

- If some opponent user does not support h264, then automatically VP8 will be used.

- If both caller and callee have h264 support, then h264 will be used.
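The two rules above can be expressed as a small decision function. This is a simplified model of the outcome, not the real WebRTC negotiation; `VideoCodec` and `negotiatedCodec` are hypothetical names used only for illustration.

```swift
enum VideoCodec: String {
    case vp8 = "VP8"
    case h264 = "H264"
}

// Simplified model of the outcome described above, not the real WebRTC
// negotiation: H264 is used only when every participant supports it.
func negotiatedCodec(participants: [Set<VideoCodec>]) -> VideoCodec {
    let everyoneHasH264 = participants.allSatisfy { $0.contains(.h264) }
    return everyoneHasH264 ? .h264 : .vp8
}

let caller: Set<VideoCodec> = [.vp8, .h264]
let calleeWithoutH264: Set<VideoCodec> = [.vp8]
print(negotiatedCodec(participants: [caller, calleeWithoutH264]).rawValue) // VP8
print(negotiatedCodec(participants: [caller, caller]).rawValue)            // H264
```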

Camera resolution

It’s possible to set custom video resolution using QBRTCVideoFormat.

let customVideoFormat: QBRTCVideoFormat = QBRTCVideoFormat.init(width: 950, height: 540, frameRate: 30, pixelFormat: .format420f) // custom video format

let cameraCapture = QBRTCCameraCapture(videoFormat: customVideoFormat, position: cameraPosition)

QBRTCVideoFormat *customVideoFormat = [QBRTCVideoFormat videoFormatWithWidth:950 height:540 frameRate:30 pixelFormat:QBRTCPixelFormat420f]; // custom video format

QBRTCCameraCapture *cameraCapture = [[QBRTCCameraCapture alloc] initWithVideoFormat:customVideoFormat position:cameraPosition];

| Parameter | Description |
|---|---|
| width | Video width. Default: 640. |
| height | Video height. Default: 480. |
| frameRate | Video frames per second. Default: 30. |
| pixelFormat | Video pixel format. Default: QBRTCPixelFormat420f. |

You can get the list of formats supported by the current device using formats(with:). Choose the one you need from the list using the snippet below.

var formats = QBRTCCameraCapture.formats(with: cameraPosition)

NSArray<QBRTCVideoFormat *> *videoFormats = [QBRTCCameraCapture formatsWithPosition:cameraPosition]; // Array of possible QBRTCVideoFormat video formats for requested device
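Once you have the list, you still need a rule for picking a format. One possible rule is to take the format whose pixel count is closest to a target resolution. The sketch below uses a hypothetical `VideoFormat` struct as a stand-in for QBRTCVideoFormat, and the supported list is made up for illustration.

```swift
// Hypothetical stand-in for QBRTCVideoFormat, holding only width and height.
struct VideoFormat: Equatable {
    let width: Int
    let height: Int
}

// Returns the supported format whose pixel count is closest to the target,
// or nil if the list is empty.
func closestFormat(to target: VideoFormat, in supported: [VideoFormat]) -> VideoFormat? {
    let targetPixels = target.width * target.height
    return supported.min { a, b in
        abs(a.width * a.height - targetPixels) < abs(b.width * b.height - targetPixels)
    }
}

// Illustrative list; a real one would come from formats(with:).
let supported = [
    VideoFormat(width: 352, height: 288),
    VideoFormat(width: 640, height: 480),
    VideoFormat(width: 1280, height: 720),
]
let choice = closestFormat(to: VideoFormat(width: 950, height: 540), in: supported)
print(choice.map { "\($0.width)x\($0.height)" } ?? "none") // 640x480
```

Comparing pixel counts ignores aspect ratio; if the aspect ratio matters for your layout, filter the list by ratio first.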

Audio codecs

You can choose audio codecs from available values:

- QBRTCAudioCodecOpus

- QBRTCAudioCodecISAC

- QBRTCAudioCodeciLBC

By default, QBRTCAudioCodecOpus is set.

let mediaStreamConfiguration = QBRTCMediaStreamConfiguration.default()

mediaStreamConfiguration.audioCodec = .codecOpus

[QBRTCMediaStreamConfiguration defaultConfiguration].audioCodec = QBRTCAudioCodecOpus;

Opus

- Supported bitrate: constant and variable, from 6 kbit/s to 510 kbit/s.

- Supported sampling rates: from 8 kHz to 48 kHz.

If you develop a Calls application that is supposed to work with high-quality audio, the only choice on audio codecs is OPUS. OPUS has the best quality, but it also requires a good internet connection.

iSAC

This codec was developed specifically for VoIP applications and audio streaming.

- Supported bitrates: adaptive and variable. From 10 kbit/s to 52 kbit/s.

- Supported sampling rates: 32 kHz.

A good choice for the voice data, but not nearly as good as OPUS.

iLBC

This audio codec is well-known. It was released in 2004 and became part of the WebRTC project in 2011, after Google acquired Global IP Solutions (the company that developed iLBC).

When you have bad connection quality and low bandwidth, you definitely should try iLBC. It should be strong in such cases.

- Supported bitrates: fixed bitrate. 15.2 kbit/s or 13.33 kbit/s

- Supported sampling rate: 8 kHz.

Thus, when you have a strong and reliable internet connection, use OPUS. If you make calls over 3G networks, use iSAC. If you still have problems, try iLBC.
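The guidance above can be captured as a simple mapping from network conditions to a codec. This is a sketch under stated assumptions: `NetworkQuality` is a hypothetical classification that your app would have to compute itself, and `AudioCodecChoice` only mirrors the codec names listed earlier rather than using the SDK types.

```swift
// Hypothetical network classification; how you measure it is up to your app.
enum NetworkQuality {
    case good      // strong, reliable connection
    case mobile3G  // calls over 3G networks
    case poor      // bad connection quality and low bandwidth
}

// Raw values mirror the QBRTCAudioCodec names listed above.
enum AudioCodecChoice: String {
    case opus = "QBRTCAudioCodecOpus"
    case isac = "QBRTCAudioCodecISAC"
    case ilbc = "QBRTCAudioCodeciLBC"
}

func recommendedAudioCodec(for quality: NetworkQuality) -> AudioCodecChoice {
    switch quality {
    case .good: return .opus
    case .mobile3G: return .isac
    case .poor: return .ilbc
    }
}

print(recommendedAudioCodec(for: .mobile3G).rawValue) // QBRTCAudioCodecISAC
```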